Agentic AI in Government: Adoption, Governance Challenges, and Oversight Gaps

More than half of government agencies are exploring or piloting agentic AI, yet they face fragmented systems and inadequate governance. A survey reveals gaps in oversight, human control (the “kill switch”), and vendor accountability, underscoring the need for integrated frameworks and robust policy implementation.

“Agentic AI has most definitely entered the chat. Over half of those we surveyed are currently in the planning stages or have actively launched a pilot effort,” said Aaron Heffron, president of Insights and Research at GovExec. “The main question remains, however, whether the current infrastructure, both technological and human, is up to the task.”

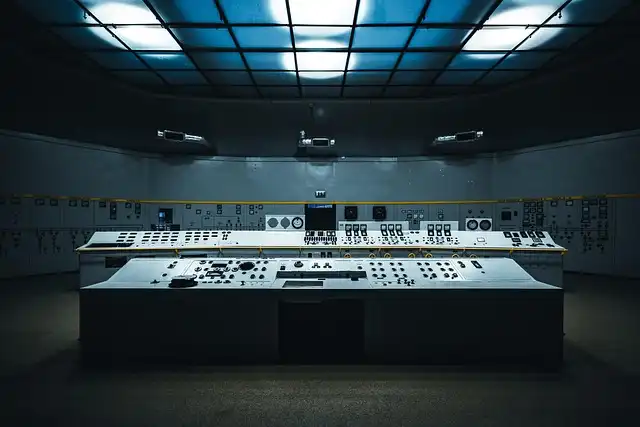

“The current environment of fragmented, siloed systems and disconnected operations only increases complexity and hinders adoption,” Alboum said. “To move forward, AI adoption must focus on bringing everything together in an AI control tower so that policies can be applied, controls enforced, and results delivered efficiently across the organization.”

Even as agencies race toward agentic AI, respondents identified potential barriers to adoption: inadequate oversight policies and a gap between the perceived necessity of governance frameworks and their implementation. The findings were released today by Market Links on behalf of ServiceNow.

Government AI Adoption Rates

According to a March survey of more than 200 technology executives across government, more than half (53%) said their agencies are exploring agentic AI or actively planning pilots of the technology. Another 15% are currently implementing agentic AI systems or have already done so, compared with 6% who said they were not yet considering agentic AI.

Ensuring Human Control & Accountability

“Federal leaders say they want human control over high-risk AI, yet fewer than a third have a kill switch to enforce it, leaving a dangerous gap between intent and capability where trust is won or lost,” the report states. “Built-in accountability is no longer optional; it is the requirement for any agency serious about deploying agentic AI at mission speed.”

To ensure accountability for agentic AI solutions, 84% of respondents said their agencies had documented escalation plans, while 78% had structured post-incident review processes. Fewer than half (44%) said their agencies included liability or responsibility provisions for AI vendors in contracts, and fewer than one-third (29%) had documented “kill switch” procedures.

“One of the big questions that you need to ask is, ‘How am I getting my data ready for AI consumption?’ That governance piece becomes essential [to] making sure that your data within your organization and your AI are working together,” advised one unnamed IT supervisor in his response.

What is Agentic AI?

Agentic AI is generally defined as autonomous systems capable of reasoning and pursuing complex goals, with the ability to take independent actions across software systems with minimal human oversight. That capacity to perform some tasks without human intervention has made it an attractive option, and it has been touted by several of President Donald Trump’s top technology officials as a key way to do more with less.

For national security, critical infrastructure and emergency response data, 79% of respondents said their agencies mandated “human-in-the-loop” oversight, with approval required for every action performed by AI. For high-risk data, like benefits claims or agency financial data, 78% of respondents said their agency requires formal human approval before high-risk actions are taken by AI, but not before every action.
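The two oversight tiers described above can be sketched as a simple approval gate. This is an illustrative sketch only, not anything taken from the survey or from an agency's actual system: the names `DataTier`, `AgentAction`, and `needs_human_approval` are hypothetical, and the handling of a "routine" tier is an assumption the survey does not address.

```python
from dataclasses import dataclass
from enum import Enum


class DataTier(Enum):
    # Hypothetical tier names loosely mirroring the categories in the survey.
    CRITICAL = "critical"    # national security, critical infrastructure, emergency response
    HIGH_RISK = "high_risk"  # benefits claims, agency financial data
    ROUTINE = "routine"      # assumption: everything else; not covered by the survey


@dataclass
class AgentAction:
    description: str
    tier: DataTier
    high_risk: bool = False  # whether this specific action is itself high-risk


def needs_human_approval(action: AgentAction) -> bool:
    """Return True if a human must approve the action before the agent runs it."""
    if action.tier is DataTier.CRITICAL:
        # Human-in-the-loop: every action requires approval.
        return True
    if action.tier is DataTier.HIGH_RISK:
        # Approval only for high-risk actions, not for every action.
        return action.high_risk
    # Assumption: routine actions proceed without approval.
    return False


if __name__ == "__main__":
    examples = [
        AgentAction("reroute emergency dispatch", DataTier.CRITICAL),
        AgentAction("read claim status", DataTier.HIGH_RISK),
        AgentAction("approve benefits payment", DataTier.HIGH_RISK, high_risk=True),
    ]
    for action in examples:
        print(action.description, "->", needs_human_approval(action))
```

In a real deployment the gate would sit in front of the agent's tool calls and be paired with logging of each decision; the sketch covers only the tiering logic.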

Oversight Gaps & AI Readiness

Survey findings indicated that while governance frameworks tended to lack maturity, routine oversight was a near-universal requirement. Nearly 90% of respondents said they required logging and audit trails for all actions, and more than 80% required automated policy checks and guardrails.

Yet per the findings, not every agency is ready for agentic AI, even as a growing number of companies offer agentic solutions. Only 20% of respondents said their agencies have defined policies for pre-deployment testing or general agentic AI use, and just 8% have a defined framework for incident response. Even fewer (6%) have a framework for third-party or vendor governance.

“This research confirms what we’re hearing from agencies every day: the appetite for agentic AI is real, but oversight hasn’t kept pace,” said Mike Hurt, Global Vice President of U.S. Public Sector at ServiceNow. “Seventy-seven percent of government leaders say oversight frameworks are essential, yet fewer than a third have actually implemented them. Agencies that build accountability into their AI processes from the beginning, not as an afterthought, will be the ones delivering strong results for people.”
